Goal

I want to create an artifact, available from a private repository (Git on dev.azure.com in this case), that will set the timezone inside the VM.

Getting started

First, let's use a very useful section of the Azure portal: the Getting Started section. In the portal, navigate to your DevTest Lab (1) and select the Getting Started option from the left menu bar (2). In this new blade, scroll down to the Learn more area and select Sample artifacts and scripts (3).

That will open the DevTestLab artifacts, scripts and samples project from Azure on GitHub. Open the Artifacts folder to see the list of all the usual artifacts found in the public repo that is available by default in the portal.

Notice how all artifacts are in their own folder. When you create a new artifact, you can always come here and pick something similar to what you are trying to do. This way, you won't start from scratch. Let's open windows-vsts-download-and-run-script.

An artifact is defined in the file Artifactfile.json. This file is mandatory and cannot be renamed. You can put scripts, images, or anything else you need inside this folder. Open the Artifactfile.json file and have a look.

As you can see, it's a simple JSON file. In section (A) you define the title, description, publisher, OS and icon. Note that the icon must be publicly accessible; it could be on GitHub, in blob storage or on a website. Section (B) defines all the parameters you may need to install your artifact on the VM. Finally, section (C) holds the command to execute.

Create the Artifact

Here is the JSON for our windows-Set-TimeZone artifact. I made it very static by not passing any parameter, but in a real situation, a timezone parameter would be better.

Artifactfile.json

{
    "$schema": "https://raw.githubusercontent.com/Azure/azure-devtestlab/master/schemas/2016-11-28/dtlArtifacts.json",
    "title": "Set TimeZone to Eastern Standard Time",
    "description": "Execute tzutil command on the VM to set the Time Zone",
    "publisher": "FBoucher",
    "tags": [
        "PowerShell"
    ],
    "iconUri": "https://raw.githubusercontent.com/Azure/azure-devtestlab/master/Artifacts/windows-run-powershell/powershell.png",
    "targetOsType": "Windows",
    "parameters": { },
    "runCommand": {
        "commandToExecute": "tzutil.exe /s \"Eastern Standard Time\""
    }
}

Create an artifact repository

For this post, I'm using Git from Azure DevOps (dev.azure.com), previously named VSTS, but any private repository should work. If it's not already done, create a project and go to the Repos section. Create a root folder named Artifacts, or something else if you prefer. Then add a new folder for your artifact. To follow best practices, start the name of your artifact with the name of the targeted OS; in my case, windows-Set-TimeZone. Now add the Artifactfile.json file defined previously. Note the URL of the repository; it should be easy to get by clicking the Clone button at the top right of the screen.

Add repository to DevTest Lab

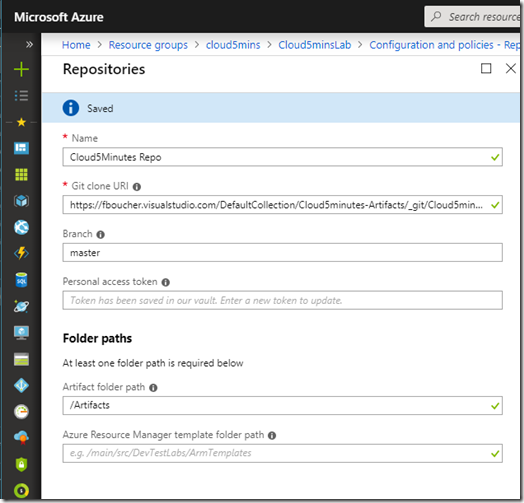

Now we need to add this repository to our DevTest Lab. From portal.azure.com, open the blade of your lab. From the left panel, click on Repository, then click the Add button.

It's time to use the repository URL noted previously. Keep in mind that the information asked for here is about the repository, not the artifact.

Use the Artifact

The only thing left is to use our artifact. You can find it while creating a VM or a formula. When you have parameters defined in your Artifactfile.json, the parameters will be listed in a form at the far left.

And if you try it, you will see that the time matches the desired timezone. Here my PC is set to display the time in 24-hour format, but it's the same... yep, I'm in Eastern Standard Time.
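As mentioned earlier, a timezone parameter would be better than a hard-coded value. Here is a minimal, untested sketch of what a parameterized Artifactfile.json could look like. The parameter name timeZoneId is hypothetical, and the use of defaultValue together with the concat and parameters expressions is an assumption based on the pattern used by artifacts in the public repo.

```json
{
    "$schema": "https://raw.githubusercontent.com/Azure/azure-devtestlab/master/schemas/2016-11-28/dtlArtifacts.json",
    "title": "Set TimeZone",
    "description": "Execute tzutil command on the VM to set the Time Zone",
    "publisher": "FBoucher",
    "tags": [
        "PowerShell"
    ],
    "iconUri": "https://raw.githubusercontent.com/Azure/azure-devtestlab/master/Artifacts/windows-run-powershell/powershell.png",
    "targetOsType": "Windows",
    "parameters": {
        "timeZoneId": {
            "type": "string",
            "displayName": "Time zone ID",
            "description": "Windows time zone ID, as listed by tzutil.exe /l",
            "defaultValue": "Eastern Standard Time"
        }
    },
    "runCommand": {
        "commandToExecute": "[concat('tzutil.exe /s \"', parameters('timeZoneId'), '\"')]"
    }
}
```

With the default value, the expression should resolve to the same command as the static version: tzutil.exe /s "Eastern Standard Time".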

Add it to an ARM template

Doing it with the nice interface is good when you are learning. However, we all know that no DevOps will do that manually every time. So let's add our repository to our ARM template. If you need more detail on the deployment method, I explain it in a previous post, How to be efficient with our Azure Devtest Lab deployments.

When you don't know the type or the structure of a resource, you can always go to the Resource Explorer (resources.azure.com); there you will be able to find your resource and see how it's defined.

So for this post our artifactsources will look like this:

{
    "properties": {
        "displayName": "Cloud5mins",
        "uri": "https://fboucher.visualstudio.com/DefaultCollection/Cloud5minsArtifacts/_git/Cloud5minsArtifacts",
        "sourceType": "VsoGit",
        "folderPath": "/Artifacts",
        "armTemplateFolderPath": "",
        "branchRef": "master",
        "securityToken": "xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx",
        "status": "Enabled"
    },
    "name": "Cloud5minsRepo",
    "type": "Microsoft.DevTestLab/labs/artifactsources"
}

artifactsources goes in the resources list inside the DevTest Lab. In an ARM template you have the main resources node (A), then you will have the lab node (B). Inside this node, you should see a second resources list (C), where the virtual network is defined. The artifactsources should go there.

Then when you declare your formula, you just need to reference this repository, exactly like the public one.
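To make the (A), (B), (C) nesting more concrete, here is a stripped-down sketch of a template with the artifactsources in place. It is not a complete lab definition. The apiVersion, the parameter names labName and repoSecurityToken, and the virtual network name are assumptions for illustration. The type is shortened to artifactsources because a resource nested inside its parent takes its type relative to that parent, and the token is passed as a securestring parameter so it does not sit in the template in clear text.

```json
{
    "$schema": "https://schema.management.azure.com/schemas/2015-01-01/deploymentTemplate.json#",
    "contentVersion": "1.0.0.0",
    "parameters": {
        "labName": { "type": "string" },
        "repoSecurityToken": { "type": "securestring" }
    },
    "resources": [
        {
            "apiVersion": "2016-05-15",
            "type": "Microsoft.DevTestLab/labs",
            "name": "[parameters('labName')]",
            "location": "[resourceGroup().location]",
            "resources": [
                {
                    "apiVersion": "2016-05-15",
                    "type": "virtualnetworks",
                    "name": "[concat('Dtl', parameters('labName'))]",
                    "dependsOn": [
                        "[resourceId('Microsoft.DevTestLab/labs', parameters('labName'))]"
                    ]
                },
                {
                    "apiVersion": "2016-05-15",
                    "type": "artifactsources",
                    "name": "Cloud5minsRepo",
                    "dependsOn": [
                        "[resourceId('Microsoft.DevTestLab/labs', parameters('labName'))]"
                    ],
                    "properties": {
                        "displayName": "Cloud5mins",
                        "uri": "https://fboucher.visualstudio.com/DefaultCollection/Cloud5minsArtifacts/_git/Cloud5minsArtifacts",
                        "sourceType": "VsoGit",
                        "folderPath": "/Artifacts",
                        "armTemplateFolderPath": "",
                        "branchRef": "master",
                        "securityToken": "[parameters('repoSecurityToken')]",
                        "status": "Enabled"
                    }
                }
            ]
        }
    ]
}
```

Here the outer resources array is (A), the Microsoft.DevTestLab/labs entry is (B), and its inner resources array is (C).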